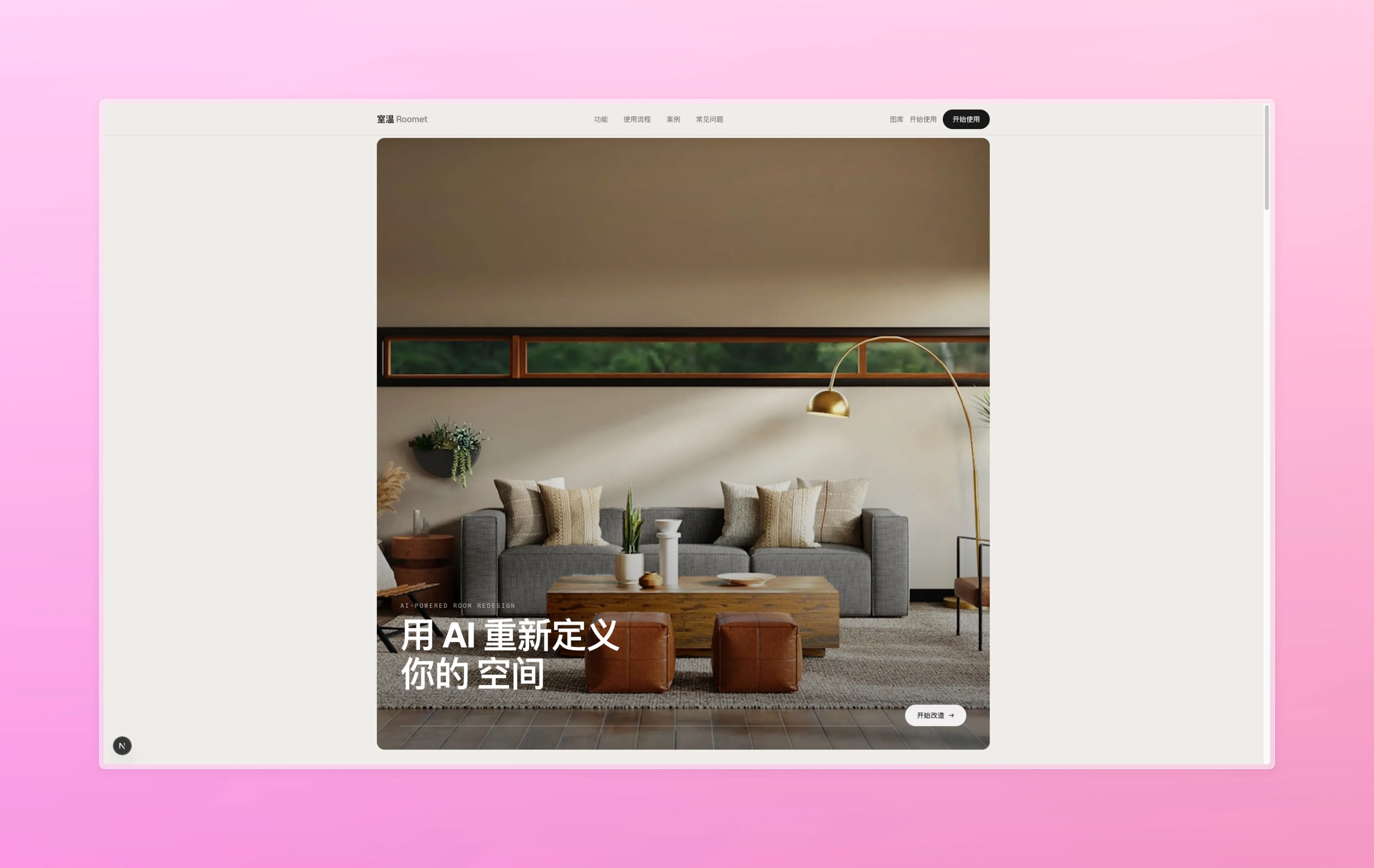

Roomet

Upload a photo of your room, pick a style, and AI re-imagines the space. Generate three variants, pick one, then edit it furniture-by-furniture until it's exactly what you want.

- Type: Web

- Role: Solo

- Status: Active

- Tech: Next.js 15, React 19, TypeScript, Tailwind v4, FastAPI, Gemini 3 Pro Image, YOLO-World, arq / Redis, Supabase PostgreSQL

- Started: Feb 2026

Point Roomet at a photo of a room and it gives you back three AI-generated re-designs in your chosen style. Pick one, then keep editing furniture-by-furniture — swap the couch, change the rug, repaint the walls — until you have the exact room you wanted. Every version is tracked so you can always go back.

What it does

Upload the photo on the left, describe the vibe, and Roomet gives you the three variants below. Each one is a fully re-rendered scene keeping the room’s bones (windows, doorways, floor plan) but letting the style, furniture, and palette go wherever the prompt takes them.

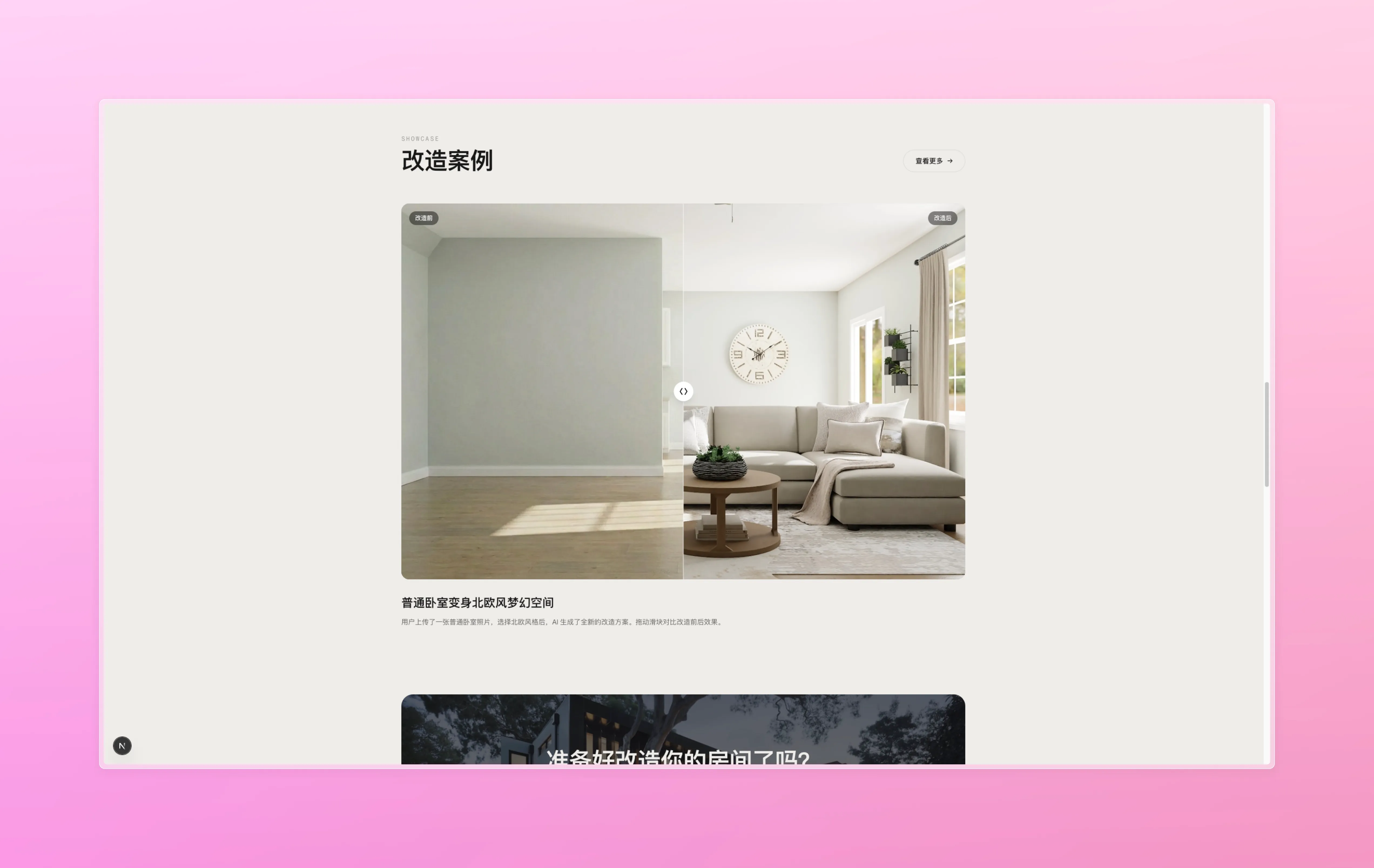

Before and after

The input is always a real photograph — phone pics, messy lighting, skew, whatever. Roomet handles them because Gemini 3 Pro Image is doing the heavy lifting.

And here’s the same room after Roomet’s first pass, with a draggable before/after slider in the real UI:

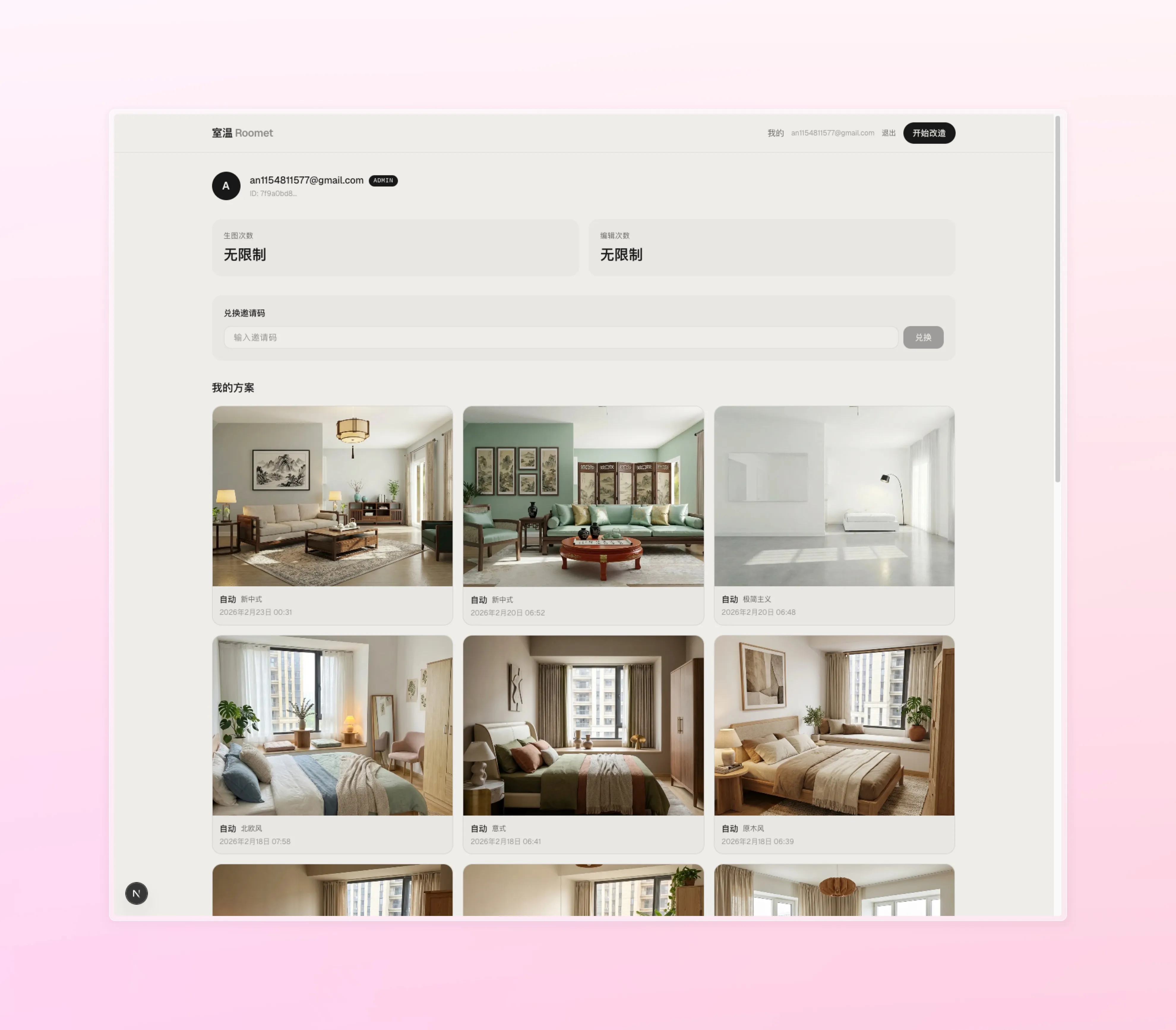

The app

Three screens carry the whole experience: a landing page, the create/configure flow, and the editor.

User flow

The /create route walks you through upload → configure → watch the shimmer animation → pick one of three variants. Picking a variant jumps you to /edit/[variantId], where the real interaction happens.

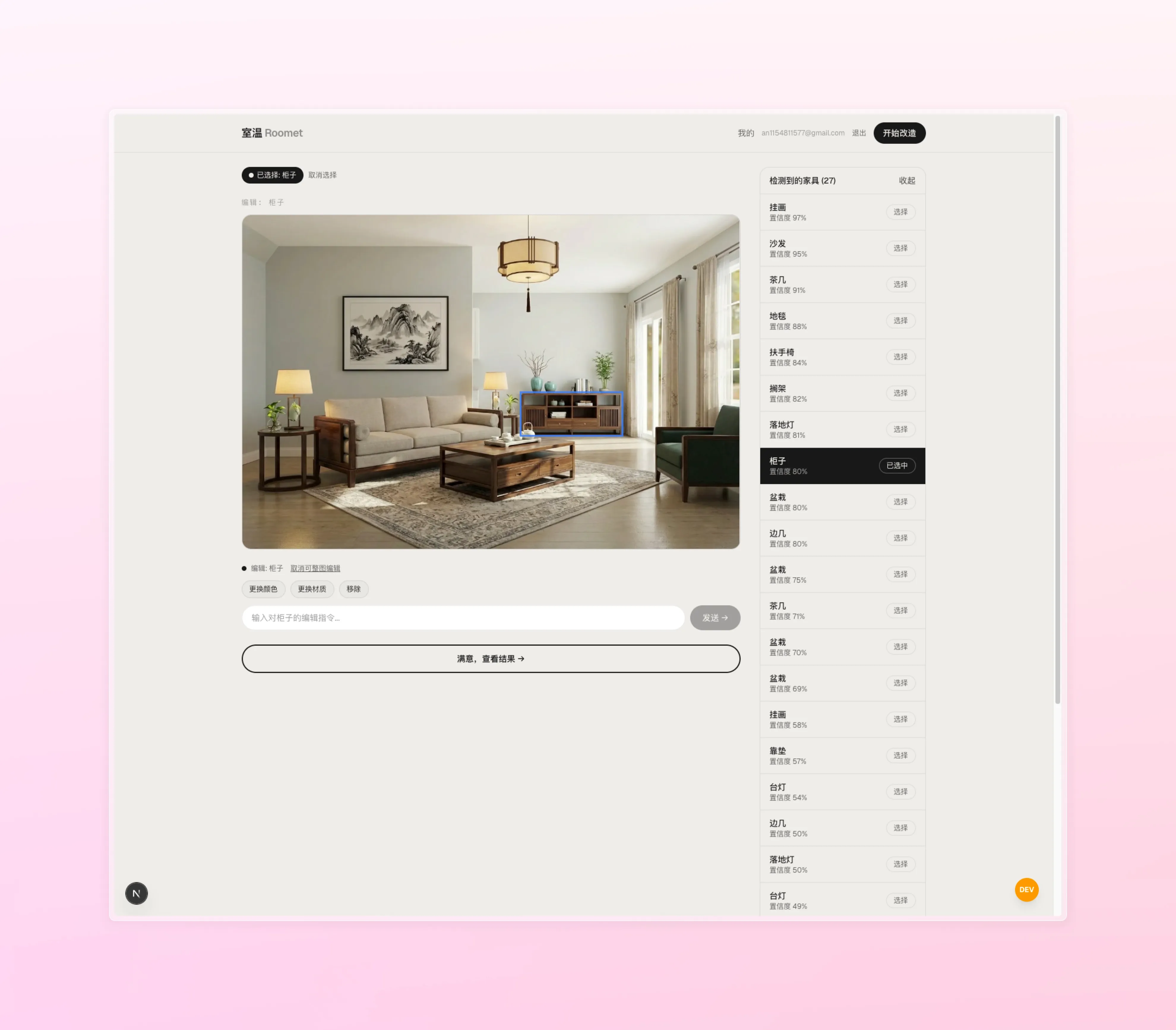

Furniture-level editing

The editor is the heart of the product. Hover any piece of furniture and a SegmentOverlay highlights it (YOLO-World + Gemini segmentation on the backend); click to select it and the bottom bar turns into a furniture-specific prompt input. Type “make this sofa cream linen” and only that sofa changes, leaving the rest of the room untouched. If nothing is selected, your prompt applies to the whole scene.
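The hover-and-select behaviour comes down to a hit test against the segmentation results. Here is a minimal sketch of that logic, written in Python for brevity even though the real overlay is a TypeScript component. The `Segment` record, its field names, and the "smallest box under the cursor wins" rule are assumptions made for illustration, not Roomet's actual implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Segment:
    """Hypothetical segment record; the field names are assumptions."""
    label: str
    x: float       # left edge, in image pixels
    y: float       # top edge, in image pixels
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        inside_x = self.x <= px <= self.x + self.width
        inside_y = self.y <= py <= self.y + self.height
        return inside_x and inside_y

    @property
    def area(self) -> float:
        return self.width * self.height


def hit_test(segments: list[Segment], px: float, py: float) -> Optional[Segment]:
    """Return the smallest segment whose box contains the point, or None."""
    hits = [s for s in segments if s.contains(px, py)]
    # Preferring the smallest box keeps a cushion selectable on top of a sofa.
    return min(hits, key=lambda s: s.area) if hits else None


def prompt_scope(selected: Optional[Segment]) -> str:
    """With no selection, the prompt applies to the whole scene."""
    return f"segment:{selected.label}" if selected else "scene"
```

A bounding-box test like this is cheap enough to run on every pointer move; a mask-level check could refine the result for irregular shapes.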

Version history

Every edit produces a new version, shown as a thumbnail strip along the bottom of the editor. Click any older version to roll back without losing the later ones — the history is a DAG, not a linear undo stack. Good enough to experiment fearlessly.

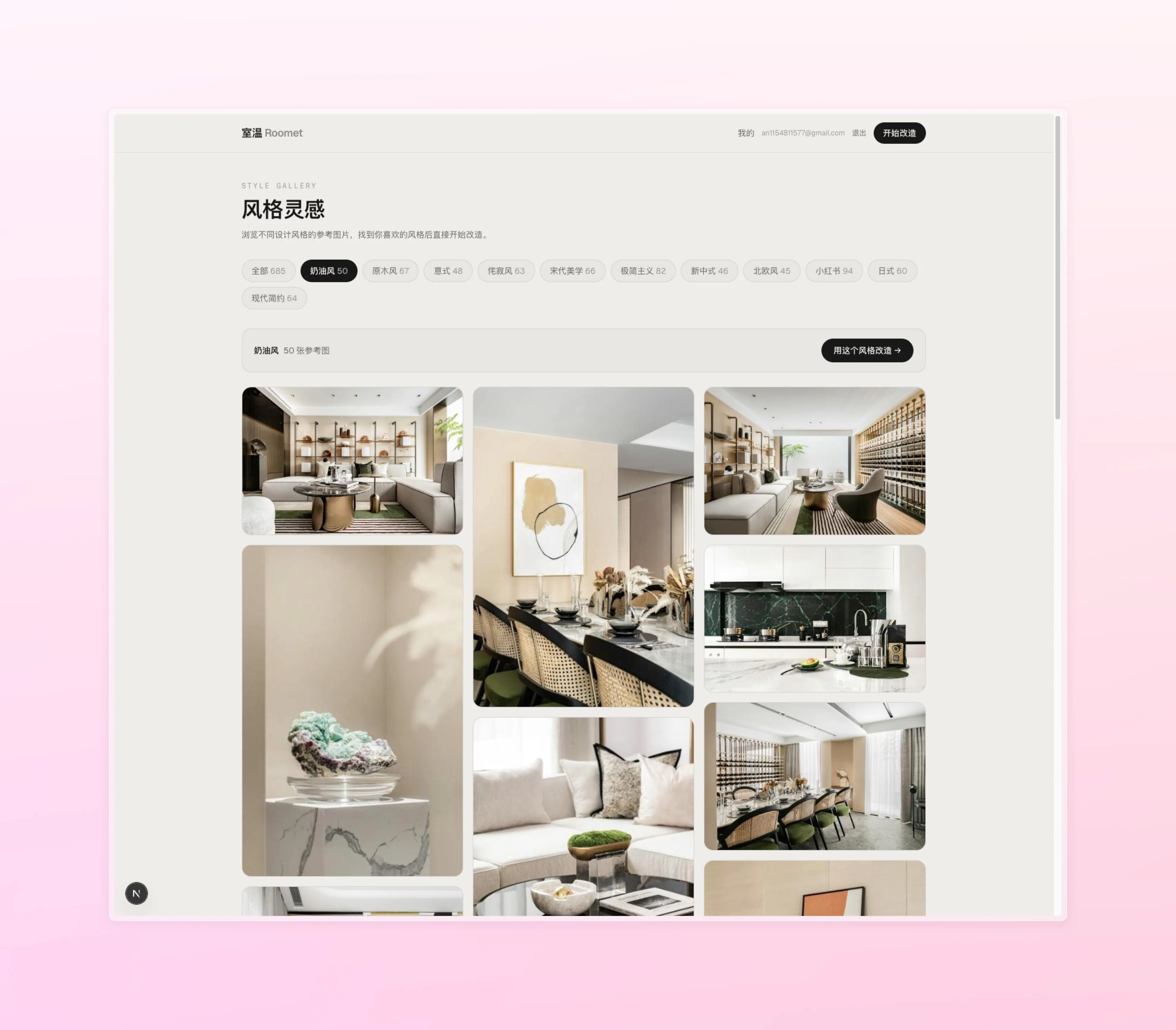
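One way to picture the version history is as a set of nodes that each point at their parent. The sketch below is an in-memory illustration under that assumption; the `Version` and `History` names and their fields are hypothetical, and the real data lives in PostgreSQL. Each version has a single parent here, which is the simplest kind of DAG (a tree).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Version:
    id: int
    parent_id: Optional[int]   # None for the first version
    prompt: str


@dataclass
class History:
    versions: dict[int, Version] = field(default_factory=dict)
    current_id: Optional[int] = None
    _next_id: int = 1

    def commit(self, prompt: str) -> Version:
        """Add a new version as a child of whichever version is current."""
        version = Version(self._next_id, self.current_id, prompt)
        self.versions[version.id] = version
        self.current_id = version.id
        self._next_id += 1
        return version

    def checkout(self, version_id: int) -> None:
        """Roll back (or forward) by moving the pointer; nothing is deleted."""
        if version_id not in self.versions:
            raise KeyError(version_id)
        self.current_id = version_id

    def lineage(self, version_id: int) -> list[int]:
        """Ids from the first version down to the given one."""
        chain = []
        cursor: Optional[int] = version_id
        while cursor is not None:
            chain.append(cursor)
            cursor = self.versions[cursor].parent_id
        return chain[::-1]
```

Rolling back to version 2 and editing again creates a sibling branch, so the later edits made from version 2 earlier are still reachable through their own lineage.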

Inspiration gallery

A separate gallery feed streams curated reference rooms grouped by style tag. Tap one to copy its style onto your own room.

Architecture

Frontend (Next.js 15, React 19, TypeScript, Tailwind v4):

- App Router with four top-level routes: /, /create, /edit/[variantId], /scene/[id] (legacy redirect)

- Canvas overlay for segmentation (SegmentOverlay.tsx) handles bounding-box hit testing and mask highlighting

- BeforeAfterSlider, StyleCard, PillSelector, ProductQueries compose the rest of the UI

- API client polls the backend for long-running jobs via pollJob() in lib/api.ts

- Next.js rewrites proxy /api/* and /uploads/* to the local FastAPI backend so the browser never talks to Gemini directly

Backend (FastAPI + Gemini + arq):

- FastAPI API with endpoints for scenes, variants, jobs, gallery, and segmentation

- Heavy generation runs in arq workers (Redis-backed task queue) so HTTP stays snappy; graceful fallback to Python threading when Redis isn’t available

- Gemini 3 Pro Image for generation and inpainting-style edits; YOLO-World for on-demand furniture segmentation

- Supabase PostgreSQL with SQLAlchemy — connection pooling, versioned variant history, job status

- Two-tier image cache: Redis (24h TTL) in front, in-memory LRU (~20 slots) on the API instance

- Chat-session-based Gemini calls so multi-turn edits on the same variant stay context-aware
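The two-tier image cache from the list above can be sketched roughly as follows. This is an illustration under stated assumptions: `TTLStore` is an in-memory stand-in for Redis rather than a real client, the class and method names are hypothetical, and the lookup order (process-local LRU first, shared store second) is a guess at how the tiers are combined.

```python
import time
from collections import OrderedDict


class LRUCache:
    """Small in-process cache that evicts the least recently used key."""

    def __init__(self, capacity=20):
        self.capacity = capacity
        self._items = OrderedDict()

    def get(self, key):
        if key not in self._items:
            return None
        self._items.move_to_end(key)        # mark as most recently used
        return self._items[key]

    def put(self, key, value):
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # drop the oldest entry


class TTLStore:
    """In-memory stand-in for Redis with per-key expiry (assumption)."""

    def __init__(self, ttl_seconds=24 * 3600, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._items = {}

    def get(self, key):
        entry = self._items.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._items[key]
            return None
        return value

    def put(self, key, value):
        self._items[key] = (value, self._clock() + self._ttl)


class TwoTierCache:
    def __init__(self, local, shared):
        self.local = local
        self.shared = shared

    def get(self, key):
        value = self.local.get(key)
        if value is not None:
            return value
        value = self.shared.get(key)
        if value is not None:
            self.local.put(key, value)       # promote for the next lookup
        return value

    def put(self, key, value):
        self.local.put(key, value)
        self.shared.put(key, value)
```

The local tier saves a network round trip for images that were just requested, while the shared tier lets API instances and workers reuse each other's results.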

Notable bits

- Furniture-aware editing — segmentation + inpainting lets you edit one object at a time instead of regenerating the whole image for every change. It’s the single difference that makes Roomet feel like a tool instead of a slot machine.

- Three variants by default — one generation call produces three stylistically different takes so you pick from a real menu, not “try again until it’s right”.

- Version DAG, not a linear undo — every edit branches off its parent. You can always roll back and take a different direction without losing the timeline you already explored.

- Smart backend fallbacks — no Redis? threading worker. Gemini rate-limited? retries with exponential backoff. YOLO-World model not loaded? lazy-load it on first segmentation request.
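The retry behaviour in the last bullet can be sketched as a small helper. This is an illustrative sketch, not the project's code: `RateLimitError` is a hypothetical stand-in for whatever the Gemini client raises when rate-limited, and the delay parameters and jitter scheme are assumptions.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for the client's rate-limit exception."""


def retry_with_backoff(call, *, max_attempts=5, base_delay=1.0, max_delay=30.0,
                       sleep=time.sleep, jitter=random.random):
    """Run call(), retrying on RateLimitError with exponentially growing waits."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise                         # out of attempts: surface the error
            # The delay doubles each attempt (1s, 2s, 4s, ...) up to max_delay.
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Jitter scales the wait to 50-100% so workers do not retry in lockstep.
            sleep(delay * (0.5 + 0.5 * jitter()))
```

Passing `sleep` and `jitter` as parameters keeps the helper easy to test without real waiting.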