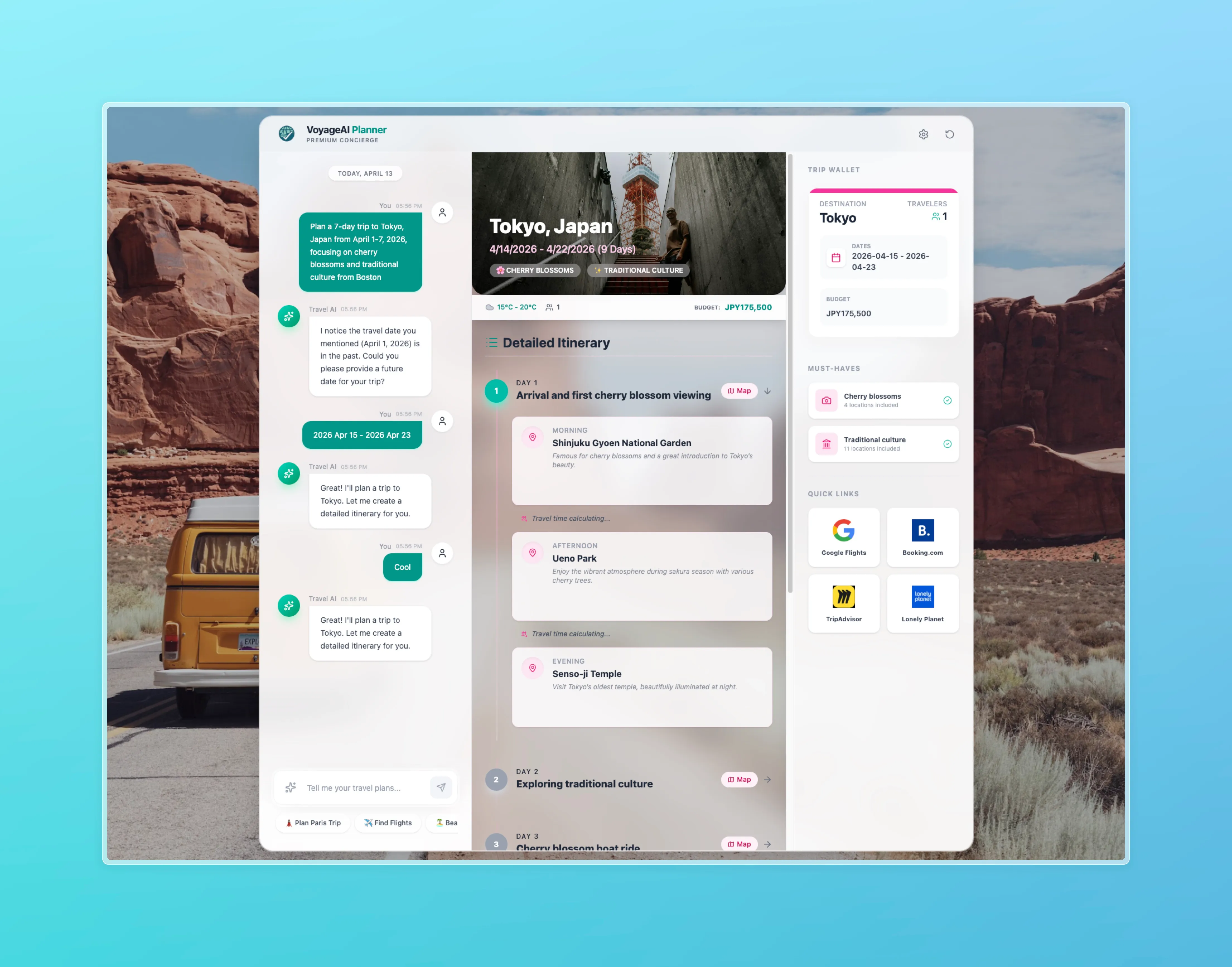

VoyageAI Planner

A multi-agent, LangGraph-powered AI travel companion — built around a security-first, self-correcting workflow.

- Type: Web
- Role: Contributor
- Status: Active
- Tech: LangGraph, LangChain, FastAPI, Server-Sent Events, OpenAI GPT-4, React 19, Vite, TypeScript, Tailwind v4, TanStack Query
- Started: Sep 2025
- Ended: Dec 2025

INFO 7375 · Final Project · Group 6 — Northeastern, Fall 2025.

Overview

VoyageAI Planner turns a single natural-language request like “Plan a 3-day honeymoon to Paris with $2000” into a validated, day-by-day itinerary.

Under the hood, a LangGraph state machine orchestrates specialized agents (a coordinator, a task decomposer, a tool-using judge, a synthesizer, and a validator) that can retry, refine, and self-correct before anything reaches the user. The frontend is a React chat UI that streams updates in real time via Server-Sent Events; the backend is a FastAPI service that exposes a single /chat/stream endpoint powering the whole experience. The constraint parser is bilingual: the same pipeline extracts destination, duration, budget, and trip type from both English and Chinese natural-language requests.

System Architecture

The full graph flow (from an incoming user message to a validated itinerary):

Start

↓

Security Check (Guard — blocks prompt injection / off-topic)

↓

Extraction Info ────→ Reject

↓

Intent Recognition

↓

[ Plan | Refine | Chat ] ────→ Reply (for Chat branch)

↓

Planner → Coordinator (Loop) → Refine Plan

↓

┌───────────────────────────────────────┐

│ AGENT LOOP · TOOL USE + SELF-JUDGE │

│ Agent → Tools → Judge → (loop) │

└───────────────────────────────────────┘

↓

Synthesize

↓

Validate (Retry on constraint violation)

↓

Reply

👉 Full interactive architecture diagram (standalone HTML).

What We Did Well

Security-first gate. A dedicated Security Check node sits at the entry point, filtering prompt-injection and off-topic requests before any LLM planning work runs, which saves tokens and prevents misuse.
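A minimal sketch of the gate-first idea, assuming a simple pattern-based injection check. The project's actual guard is not shown here and may well be LLM-based; `is_safe`, `handle_message`, and the patterns are hypothetical names chosen for illustration, and off-topic detection is left out because it would need a classifier.

```python
import re
from typing import Callable, Dict

# Hypothetical patterns for illustration only; a real guard would likely
# combine rules like these with an LLM or classifier check.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your |the )?system prompt", re.IGNORECASE),
]


def is_safe(message: str) -> bool:
    """Return False when the message matches a known injection pattern."""
    return not any(p.search(message) for p in INJECTION_PATTERNS)


def handle_message(message: str, plan: Callable[[str], str]) -> Dict[str, str]:
    """Run the guard first; call the expensive planner only if it passes."""
    if not is_safe(message):
        return {"status": "rejected", "reply": "Sorry, I can only help with travel planning."}
    return {"status": "ok", "reply": plan(message)}
```

Because the planner is passed in as a callable, it is easy to confirm that a rejected message never triggers it.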

Intent router. One endpoint, four behaviors: plan, refine, chat, or reply. The Intent Recognition node routes each message to exactly the sub-graph it needs, so simple small talk wastes no compute.
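The routing step can be sketched in plain Python as a classifier plus a lookup table. This follows the three branches in the diagram (Plan, Refine, Chat), with Reply as the terminal node for chat. The keyword classifier is only a stand-in for the LLM-based Intent Recognition node, and `recognize_intent`, `ROUTES`, and the node names are assumptions.

```python
from typing import Dict

# Hypothetical mapping from recognized intent to the next graph node.
ROUTES: Dict[str, str] = {
    "plan": "planner",
    "refine": "refine_plan",
    "chat": "reply",
}

REFINE_WORDS = ("cheaper", "change", "swap", "instead")
PLAN_WORDS = ("plan", "itinerary", "trip")


def recognize_intent(message: str, has_plan: bool) -> str:
    """Keyword stand-in for the LLM intent classifier (an assumption)."""
    text = message.lower()
    # Refinement only makes sense when an itinerary already exists.
    if has_plan and any(word in text for word in REFINE_WORDS):
        return "refine"
    if any(word in text for word in PLAN_WORDS):
        return "plan"
    return "chat"


def route(message: str, has_plan: bool = False) -> str:
    """Return the name of the node that should handle this message."""
    return ROUTES[recognize_intent(message, has_plan)]
```

With this shape, `route("make day 2 cheaper", has_plan=True)` goes to the refine branch, while a greeting falls through to the reply node without touching the planner.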

Self-correcting agent loop. Tool → Judge → Tool. The Judgement Agent decides whether a sub-task is complete or needs another tool call, with a hard retry ceiling and a fallback path — no infinite loops, no silent failures.
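A bounded tool-and-judge loop of this kind might look like the sketch below. It is plain Python rather than the project's LangGraph nodes; `run_subtask`, the callable signatures, and the ceiling of 3 attempts are assumptions, since the actual retry limit is not stated here.

```python
from typing import Callable, Tuple

MAX_ATTEMPTS = 3  # assumed ceiling; the real value is not given in this write-up


def run_subtask(
    task: str,
    tool: Callable[[str, int], str],
    judge: Callable[[str, str], bool],
    fallback: Callable[[str], str],
    max_attempts: int = MAX_ATTEMPTS,
) -> Tuple[str, int, bool]:
    """Call the tool until the judge accepts, up to max_attempts.

    Returns (result, attempts_used, used_fallback).
    """
    for attempt in range(1, max_attempts + 1):
        result = tool(task, attempt)
        if judge(task, result):
            return result, attempt, False
    # Ceiling reached: take the explicit fallback path instead of looping
    # forever or returning an unchecked result.
    return fallback(task), max_attempts, True
```

The `used_fallback` flag makes the degraded path visible to the caller, which is one way to avoid silent failures.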

Plan validation node. Every synthesized itinerary passes through a Validator that checks duration, budget, and structural integrity against the original constraints before it ever reaches the user.
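As a rough illustration of the three checks (duration, budget, structure), here is a sketch that returns a list of violations. The itinerary shape (a list of days, each with an `activities` list carrying a `cost`) and the names `Constraints` and `validate` are assumptions, not the project's schema.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Constraints:
    destination: str
    days: int
    budget: float


def validate(itinerary: List[Dict], constraints: Constraints) -> List[str]:
    """Return a list of violations; an empty list means the plan passes."""
    errors: List[str] = []

    # Duration: one entry per requested day.
    if len(itinerary) != constraints.days:
        errors.append(f"expected {constraints.days} days, got {len(itinerary)}")

    # Structure: every day needs at least one activity.
    for index, day in enumerate(itinerary, start=1):
        if not day.get("activities"):
            errors.append(f"day {index} has no activities")

    # Budget: total cost must not exceed the stated budget.
    total = sum(
        activity.get("cost", 0)
        for day in itinerary
        for activity in day.get("activities", [])
    )
    if total > constraints.budget:
        errors.append(f"total cost {total} exceeds budget {constraints.budget}")

    return errors
```

A non-empty result is what would trigger the retry edge shown after Validate in the diagram.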

Refine, don’t rebuild. A dedicated Refine Plan branch lets users tweak an existing itinerary (“make day 2 cheaper”) without re-running the full pipeline, which is faster and much cheaper.

Streaming UX over SSE. The frontend shows live node progress (coordinator, judge, synthesize), so users see the agent thinking instead of staring at a spinner.
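On the wire, each progress update is a Server-Sent Events frame: an `event:` line, a `data:` line, and a blank line. The sketch below shows how node progress could be serialized that way. The event names (`node`, `done`) and payload fields are assumptions, not the project's actual protocol.

```python
import json
from typing import Dict, Iterable, Iterator


def sse_frame(event: str, data: Dict) -> str:
    """Format one SSE frame; json.dumps keeps the payload on a single line."""
    return f"event: {event}\ndata: {json.dumps(data)}\n\n"


def stream_progress(nodes: Iterable[str]) -> Iterator[str]:
    """Yield one frame per graph node, then a final 'done' frame."""
    for node in nodes:
        yield sse_frame("node", {"node": node, "status": "running"})
    yield sse_frame("done", {})


if __name__ == "__main__":
    for frame in stream_progress(["coordinator", "judge", "synthesize"]):
        print(frame, end="")
```

A generator like this is the kind of object a streaming HTTP response can consume directly, and the browser's `EventSource` can then dispatch on the event name.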

Clean, modular graph. Nodes, routers, core state, and utils live in separate packages. Adding a new capability is a matter of registering one node — no tangled monolith.

Bilingual constraint parsing. LLM-powered normalization extracts destination, duration, budget, and trip type from both English and Chinese natural-language requests with the same pipeline.
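One way to picture the normalization step: the LLM is asked to return the four fields as JSON, and a small function coerces them into a single typed structure regardless of the input language. This sketch assumes the model has already translated the destination and trip type to English; `TripConstraints`, `normalize`, and the JSON keys are hypothetical.

```python
import json
import re
from dataclasses import dataclass


@dataclass
class TripConstraints:
    destination: str
    days: int
    budget: float
    trip_type: str


def normalize(llm_json: str) -> TripConstraints:
    """Coerce loosely formatted LLM output into one typed structure."""
    raw = json.loads(llm_json)
    # Keep only digits (and the decimal point for money) so values such as
    # "$2,000", "2000美元", or "3天" all parse the same way.
    budget_digits = re.sub(r"[^\d.]", "", str(raw["budget"]))
    days_digits = re.sub(r"\D", "", str(raw["days"]))
    return TripConstraints(
        destination=str(raw["destination"]).strip(),
        days=int(days_digits),
        budget=float(budget_digits),
        trip_type=str(raw["trip_type"]).strip().lower(),
    )
```

Downstream nodes then only ever see `TripConstraints`, so nothing after the parser needs to know which language the request was written in.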

Stack detail

- Agent: LangGraph + LangChain + OpenAI GPT-4. Nodes are registered as Python modules under travel_planner/ with separate packages for routers, core state, and tool adapters.
- API: FastAPI with a single /chat/stream Server-Sent Events endpoint that multiplexes node events back to the frontend.
- UI: React 19, Vite, TypeScript, Tailwind v4, TanStack Query, and Lucide icons. The chat UI subscribes to the SSE stream and renders each agent node’s progress inline.

My contribution

Group 6 project — specific contribution notes go here.